This paper takes 3D joint data captured by Vicon or Kinect sensors as input and outputs predicted quality scores for rehabilitation movements. The input sequence is first processed by the Frame Topology Fusion (FTF) block, which builds upon the multi-order adjacency matrix \(\mathscr {A}\). Specifically, it integrates the information matrix \(\mathscr {A}_{end}\) of distal motion nodes to construct a learnable feature matrix for each motion frame. This information is then fed into a hierarchical temporal convolutional layer, in which different temporal convolution branches extract motion features over different temporal ranges. The fused output of these branches is processed by an attention module so that the network focuses more effectively on information relevant to limb movements. Finally, Long Short-Term Memory (LSTM) layers and fully connected layers extract deep motion features and produce the output score. Figure 2 illustrates the network architecture of our proposed FTF-HGCN, which consists mainly of the FTF blocks and the Hierarchical Temporal Convolution Attention (HTCA) module.

(a) The architecture of the frame topology fusion hierarchical graph convolution network (FTF-HGCN). (b) Frame topology fusion (FTF) block. (c) Attention aggregation module (AAM). The network takes 3D joints as input, and a final fully connected layer outputs the score used to assess the quality of rehabilitation motions.

Preliminaries

In this study, the proposed graph-based model for motion analysis and feature extraction processes the input video data as follows. The video input is defined as \(O=(O_1,O_2,\ldots ,O_n)\), where n denotes the total number of collected videos, and each video \(O_i\) is further decomposed into frame-level data. Specifically, the j-th frame satisfies \(f_j \in R^{(V,C)}\) and forms the spatial feature representation, where V denotes the human joint nodes (and, as a dimension, their number) and C is the feature dimension of each node. To capture dynamic interactions between nodes within a frame, we construct a graph \(G = (V,E,X)\), where E represents the connectivity between nodes and \(X \in R^{(T,V,C)}\) holds the node attributes that encapsulate the temporal skeletal data, with T denoting the temporal length. The entry \(A_{ij}\) of the adjacency matrix quantifies the spatial dependency between the i-th and j-th nodes. In the processing pipeline, the input sequences undergo feature extraction and transformation via the graph convolution \(Y = {\mathscr {A}_k}X{W_k}\), where \(\mathscr {A}_k\) is the adjacency matrix, X is the input feature matrix, and \(W_k\) is a learnable weight matrix. By combining graph topology with convolution, the model jointly analyzes the temporal features of the nodes and the spatial relationships among them, which provides the basis for the subsequent motion-related tasks.
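The graph convolution \(Y = \mathscr {A}_k X W_k\) can be illustrated with a minimal Python sketch, assuming one frame is a V×C list of joint features and using plain nested lists instead of a tensor library. The toy three-joint chain, the feature values, and the helper names (`matmul`, `graph_conv`, `graph_conv_sequence`) are hypothetical.

```python
# Sketch of the per-frame graph convolution Y = A_k X W_k using plain lists.

def matmul(a, b):
    """Multiply an (n x m) matrix by an (m x p) matrix, both given as nested lists."""
    m = len(b)
    if any(len(row) != m for row in a):
        raise ValueError("inner dimensions must agree")
    p = len(b[0])
    return [[sum(row[t] * b[t][j] for t in range(m)) for j in range(p)] for row in a]


def graph_conv(adj, x, w):
    """One frame: adj is (V x V), x is (V x C_in), w is (C_in x C_out); returns (V x C_out)."""
    return matmul(matmul(adj, x), w)


def graph_conv_sequence(adj, x_seq, w):
    """Apply the same A_k and W_k to every frame of X in R^(T x V x C)."""
    return [graph_conv(adj, frame, w) for frame in x_seq]


# Hypothetical 3-joint chain (e.g. shoulder-elbow-wrist) with self-loops.
A = [[1, 1, 0],
     [1, 1, 1],
     [0, 1, 1]]
X = [[1.0, 0.0],  # joint 0, C = 2 features
     [0.0, 1.0],  # joint 1
     [1.0, 1.0]]  # joint 2
W = [[1.0, 0.0],
     [0.0, 2.0]]

Y = graph_conv(A, X, W)
print(Y)  # [[1.0, 2.0], [2.0, 4.0], [1.0, 4.0]]
```

Each row of Y mixes a joint's own features with those of its skeletal neighbors (through A) before the channel projection W is applied.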

Frame topology fusion block (FTF block)

Topology construction

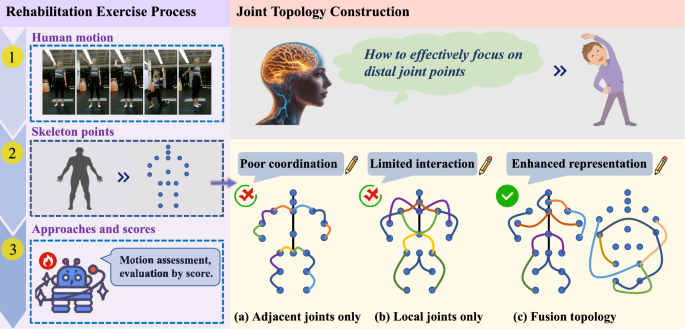

Building upon the multi-order neighborhood topology matrix \(\mathscr {A}\), we manually design informational connections among distal motion joints. The set N of distal nodes, i.e., the nodes with large movement amplitudes, is selected according to:

$$\begin{aligned} dis(n)\le 1,\quad n\in N, \end{aligned}$$

(1)

where dis(·) denotes the distance from a given node to the nearest primary distal node. Limb-end nodes are designated as primary distal nodes (\(dis=0\)), while secondary distal nodes satisfy \(dis=1\); the set N comprises both. Next, to obtain the edge set \(\varepsilon\) of the distal nodes, we construct self-connection feature edges \(\varepsilon _{sc}\) and fully connected feature edges \(\varepsilon _{fc}\). \(\varepsilon _{sc}\) is formed by the self-connections of the distal nodes. To construct \(\varepsilon _{fc}\), we loop over the distal nodes and pass each one to an edge selector, which connects it to the other distal nodes. The fully connected feature edges \(\varepsilon _{fc}\) are defined by Eq. (2).

$$\begin{aligned} \varepsilon _{fc}[i][j]= \begin{cases} 0, & i\not \in {N}\vee j\not \in {N} \\ 1, & i\in {N}\wedge j\in {N} \end{cases}\end{aligned}$$

(2)

The final edge set of the distal nodes is obtained through the following formula:

$$\begin{aligned} \varepsilon =\varepsilon _{sc}\cup \varepsilon _{fc} \end{aligned}$$

(3)
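The selection rule of Eq. (1) and the edge sets of Eqs. (2) and (3) can be sketched as follows. This is an illustration under stated assumptions, not the authors' implementation: dis(·) is assumed to be the hop count along skeleton edges, the limb-end joints are passed in by hand (mirroring the manually designed approach), and the 7-joint skeleton and function names are hypothetical.

```python
from collections import deque


def hop_distance(sources, edges, num_nodes):
    """Multi-source BFS: hops from every joint to its nearest joint in `sources`."""
    neighbors = [[] for _ in range(num_nodes)]
    for i, j in edges:
        neighbors[i].append(j)
        neighbors[j].append(i)
    dist = [None] * num_nodes
    queue = deque(sources)
    for s in sources:
        dist[s] = 0
    while queue:
        u = queue.popleft()
        for v in neighbors[u]:
            if dist[v] is None:
                dist[v] = dist[u] + 1
                queue.append(v)
    return dist


def select_distal_nodes(edges, num_nodes, limb_ends):
    """Eq. (1): N = {n : dis(n) <= 1}, with limb-end joints at dis = 0."""
    dist = hop_distance(limb_ends, edges, num_nodes)
    return sorted(n for n, d in enumerate(dist) if d is not None and d <= 1)


def distal_edge_sets(distal, num_nodes):
    """Return eps_sc, eps_fc (Eq. 2) and eps = eps_sc | eps_fc (Eq. 3) as 0/1 matrices."""
    eps_sc = [[0] * num_nodes for _ in range(num_nodes)]
    eps_fc = [[0] * num_nodes for _ in range(num_nodes)]
    for i in distal:
        eps_sc[i][i] = 1          # self-connection of each distal node
        for j in distal:          # edge selector: link node i to the distal nodes
            eps_fc[i][j] = 1
    eps = [[a | b for a, b in zip(r_sc, r_fc)] for r_sc, r_fc in zip(eps_sc, eps_fc)]
    return eps_sc, eps_fc, eps


# Hypothetical 7-joint skeleton: 0 = spine, 1-2-3 = left shoulder/elbow/hand,
# 4-5-6 = right shoulder/elbow/hand. The hands are the manually chosen limb ends.
EDGES = [(0, 1), (1, 2), (2, 3), (0, 4), (4, 5), (5, 6)]
N = select_distal_nodes(EDGES, 7, limb_ends=[3, 6])
print(N)  # [2, 3, 5, 6]
eps_sc, eps_fc, eps = distal_edge_sets(N, 7)
```

As written, Eq. (2) also sets the diagonal entries of distal nodes to 1, so \(\varepsilon _{sc}\subseteq \varepsilon _{fc}\) and the union in Eq. (3) equals \(\varepsilon _{fc}\); keeping the two sets separate matters because their feature maps enter Eq. (5) as distinct subsets.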

Finally, a graph generator constructs a feature map from the connections in each distal edge set as follows:

$$\begin{aligned} \mathscr {A}_{g}=sum(g,\dim =1)\times \left((1-I)\odot g+(I\odot g)^{-1}\right) \end{aligned}$$

(4)

The feature maps generated from \(\varepsilon _{sc}\) and \(\varepsilon _{fc}\) are standardized and then concatenated to obtain the final output:

$$\begin{aligned} \mathscr {A}_{end}=\mathop {\Big \Vert }_{g\in \{g_{sc},\,g_{fc}\}}\mathscr {A}_{g} \end{aligned}$$

(5)

where \(g_{sc}\) and \(g_{fc}\) are the feature maps formed by \(\varepsilon _{sc}\) and \(\varepsilon _{fc}\), respectively. I is the identity matrix and \(\odot\) denotes the Hadamard product. \(\mathscr {A}_{g}\) is the topological matrix corresponding to the feature map formed by the distal edge set.
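Eq. (5) can be read as stacking the two standardized maps into separate topology subsets. The sketch below assumes that standardization means the symmetric degree normalization \(D^{-\frac{1}{2}}gD^{-\frac{1}{2}}\) (the same form used in Eq. (8)); the paper does not state this, and the graph generator of Eq. (4) is not reproduced, so the small 0/1 matrices simply stand in for \(g_{sc}\) and \(g_{fc}\). Names and values are hypothetical.

```python
import math


def sym_normalize(g):
    """Assumed standardization: D^{-1/2} g D^{-1/2}, with D_ii = sum_j g_ij.

    Rows with zero degree (non-distal joints) stay all zero.
    """
    degree = [sum(row) for row in g]
    inv_sqrt = [1.0 / math.sqrt(d) if d > 0 else 0.0 for d in degree]
    n = len(g)
    return [[inv_sqrt[i] * g[i][j] * inv_sqrt[j] for j in range(n)] for i in range(n)]


def build_a_end(g_sc, g_fc):
    """Eq. (5) read as stacking the two standardized maps into a (2, V, V) set of subsets."""
    return [sym_normalize(g_sc), sym_normalize(g_fc)]


# Hypothetical 4-joint example in which joints 1 and 2 are distal.
G_SC = [[0, 0, 0, 0], [0, 1, 0, 0], [0, 0, 1, 0], [0, 0, 0, 0]]
G_FC = [[0, 0, 0, 0], [0, 1, 1, 0], [0, 1, 1, 0], [0, 0, 0, 0]]
A_END = build_a_end(G_SC, G_FC)
```

Stacking rather than summing keeps the self-connection and fully connected maps as separate subsets, so each can receive its own weights later.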

Frame topology fusion

The constructed fusion topology is applied to each action frame, and a learnable feature matrix is established for it, as shown in Fig. 2b. To better capture subtle inter-frame differences in actions, more detailed topological information about the distal joints of the human skeleton is incorporated. Following the construction of the neighborhood topology matrix, \(\mathscr {A}_{end}\) is likewise made learnable, so that \(\mathscr {A}\) and \(\mathscr {A}_{end}\) both learn adaptive node-wise weights along the frame dimension. The two matrices are then used in parallel graph convolutions, and the outputs are concatenated:

$$\begin{aligned} F_{out}=\mathop {\Big \Vert }_{\mathscr {A}_{k}\in \{\mathscr {A},\,\mathscr {A}_{end}\}}\mathscr {A}_{k}XW_{k} \end{aligned}$$

(6)

where \(\mathscr {A}_{k}\) denotes the k-th topology subset of the fused structure and \(W_k\) denotes the trainable parameters for that subset. Building on the aggregated information of the whole skeleton, this strengthens the network's attention to the motion of the distal nodes and extends the features to the frame level. As a result, the spatial node topology can differ between motion frames, which facilitates the learning of subtle differences across frames.
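A minimal sketch of Eq. (6), assuming concatenation along the channel dimension and treating \(\mathscr {A}\), \(\mathscr {A}_{end}\), and the weights as fixed constants (in the network they are learned). The three-joint frame and the function name `ftf_graph_conv` are hypothetical.

```python
def matmul(a, b):
    """(n x m) @ (m x p) for nested lists."""
    return [[sum(row[t] * b[t][j] for t in range(len(b))) for j in range(len(b[0]))]
            for row in a]


def ftf_graph_conv(subsets, weights, x):
    """Eq. (6): compute A_k X W_k per topology subset, then concatenate along channels."""
    outputs = [matmul(matmul(a_k, x), w_k) for a_k, w_k in zip(subsets, weights)]
    # Row v of F_out joins joint v's features from every subset.
    return [sum((out[v] for out in outputs), []) for v in range(len(x))]


# Hypothetical 3-joint frame: A is the neighborhood topology,
# A_end links the distal joints 1 and 2.
A = [[1, 1, 0], [1, 1, 1], [0, 1, 1]]
A_END = [[0, 0, 0], [0, 1, 1], [0, 1, 1]]
X = [[1.0], [2.0], [3.0]]
F_OUT = ftf_graph_conv([A, A_END], [[[1.0]], [[10.0]]], X)
print(F_OUT)  # [[3.0, 0.0], [6.0, 50.0], [5.0, 50.0]]
```

The non-distal joint 0 receives zeros in the \(\mathscr {A}_{end}\) channels, so the extra channels carry only distal-joint information.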

In addition, to account for the motion correlation between consecutive frames, a ConvLSTM [30] layer is employed to predict the topological relationships. This allows the connection weights between nodes to vary over time, so that the interdependencies of actions are captured more effectively. The predicted temporal topology matrix is then combined with the manually constructed fusion topology matrix to derive a new learnable matrix:

$$\begin{aligned} \mathscr {A}^{\prime }=\operatorname {softmax}\left[\sigma \left(\operatorname {ConvLSTM}\left(X^{T}\right)\right)+\mathscr {A}\right] \end{aligned}$$

(7)

where X is the input feature, \(\sigma\) is an activation function, and \(\mathscr {A}\) is the initial topology matrix formed by \(g_{sc}\) and \(g_{fc}\).
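Eq. (7) can be sketched for a single frame as follows. Because the ConvLSTM is not specified in enough detail here, its output is replaced by a given logit matrix `P`; \(\sigma\) is assumed to be the sigmoid and the softmax is assumed to run over each row. All names and values are illustrative.

```python
import math


def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))


def row_softmax(m):
    """Numerically stable softmax over each row."""
    out = []
    for row in m:
        peak = max(row)
        exps = [math.exp(v - peak) for v in row]
        total = sum(exps)
        out.append([e / total for e in exps])
    return out


def fuse_temporal_topology(predicted, a_init):
    """Eq. (7) for one frame: A' = softmax(sigma(P) + A), P standing in for ConvLSTM output."""
    combined = [[sigmoid(p) + a for p, a in zip(p_row, a_row)]
                for p_row, a_row in zip(predicted, a_init)]
    return row_softmax(combined)


# Hypothetical 2-joint example; a real P would come from ConvLSTM(X^T) at each time step.
A_INIT = [[1.0, 0.0], [0.0, 1.0]]
P = [[0.0, 0.0], [0.0, 0.0]]
A_PRIME = fuse_temporal_topology(P, A_INIT)
```

With a per-frame `P`, each time step gets its own \(\mathscr {A}^{\prime }\), which is how the connection weights can vary over time while the manually constructed \(\mathscr {A}\) acts as a shared prior.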

In summary, the overall formula for graph convolution is:

$$\begin{aligned} H^{(l)}=\sigma \left(D^{-\frac{1}{2}}\mathscr {A}^{\prime }D^{-\frac{1}{2}}H^{(l-1)}W^{(l-1)}\right) \end{aligned}$$

(8)

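where D is the diagonal degree matrix of \(\mathscr {A}^{\prime }\) (\(D_{ii}=\sum _j \mathscr {A}^{\prime }_{ij}\)), \(H^{(l)}\) denotes the joint action features at layer l, and \(W^{(l-1)}\) represents the learnable weight parameters.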
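Eq. (8) corresponds to the familiar normalized graph convolution layer. The sketch below assumes \(\sigma\) is ReLU and that D holds the row sums of \(\mathscr {A}^{\prime }\); it is a plain-Python illustration with hypothetical values, not the FTF-HGCN code.

```python
import math


def matmul(a, b):
    """(n x m) @ (m x p) for nested lists."""
    return [[sum(row[t] * b[t][j] for t in range(len(b))) for j in range(len(b[0]))]
            for row in a]


def normalized_gcn_layer(a_prime, h, w):
    """Eq. (8) with sigma = ReLU: H_l = ReLU(D^-1/2 A' D^-1/2 H_{l-1} W_{l-1})."""
    n = len(a_prime)
    degree = [sum(row) for row in a_prime]            # D_ii = sum_j A'_ij
    inv_sqrt = [1.0 / math.sqrt(d) if d > 0 else 0.0 for d in degree]
    a_norm = [[inv_sqrt[i] * a_prime[i][j] * inv_sqrt[j] for j in range(n)]
              for i in range(n)]
    z = matmul(matmul(a_norm, h), w)
    return [[max(0.0, v) for v in row] for row in z]  # ReLU


# Hypothetical 2-joint layer with one input channel and two output channels.
A_PRIME = [[1.0, 1.0], [1.0, 1.0]]
H_PREV = [[2.0], [-4.0]]
W_PREV = [[1.0, -1.0]]
H_NEXT = normalized_gcn_layer(A_PRIME, H_PREV, W_PREV)
```

The symmetric normalization keeps the scale of aggregated features independent of how many neighbors a joint has.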

Hierarchical temporal convolution attention module (HTCA)

Branch temporal convolution

To extract information from actions of varying durations, this paper introduces a multi-level information extraction module consisting of four different Branch Temporal Convolutions (BTC). The construction and parameter settings of each BTC are shown in Fig. 3, where Dil denotes the dilation rate of the dilated convolution and MP denotes the kernel size of max pooling. Specifically, the input is split into four branches according to convolution kernel size, each comprising two dilated convolutions, one convolutional long short-term memory network (ConvLSTM), and one max pooling operation. Within each branch, dilated convolution enlarges the receptive field without increasing the kernel size; ConvLSTM captures long-term dependencies across motion frames, strengthening the network's ability to learn from extended sequences; and max pooling prioritizes the nodes with the largest displacement amplitudes, allowing the network to learn more detailed motion features.

Components of the BTC module and specific parameter settings. (a) The BTC module consists of four branches built mainly from Dilation Temporal Convolution (DTC), ConvLSTM, and MaxPool components; the information within each branch is concatenated to form that branch's output. (b) Specific convolution kernel parameters for the different BTC modules. Convolutions at different scales allow the network to capture a wider range of contextual information.
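The effect of dilation inside a branch can be made concrete with a simplified one-dimensional sketch. It applies stacked "valid" dilated convolutions and non-overlapping max pooling to a single joint coordinate over time and omits the ConvLSTM; the kernel, dilation rates, pooling size, and kernel sizes (3, 5, 7, 9) are hypothetical placeholders, not the Fig. 3 settings. `receptive_field` uses the standard formula \(1+\sum _i (k-1)d_i\) for stacked stride-1 dilated convolutions with kernel size k and dilation rates \(d_i\).

```python
def dilated_conv1d(seq, kernel, dilation):
    """'Valid' dilated 1-D convolution (cross-correlation) along the time axis."""
    span = (len(kernel) - 1) * dilation
    return [sum(k * seq[t + i * dilation] for i, k in enumerate(kernel))
            for t in range(len(seq) - span)]


def max_pool1d(seq, size):
    """Non-overlapping temporal max pooling (stride equal to the window size)."""
    return [max(seq[t:t + size]) for t in range(0, len(seq) - size + 1, size)]


def receptive_field(kernel_size, dilations):
    """Frames covered by one output of stacked dilated convs sharing one kernel size."""
    return 1 + sum((kernel_size - 1) * d for d in dilations)


def branch(seq, kernel, dilations, pool):
    """One simplified branch: stacked dilated convs, then max pooling (ConvLSTM omitted)."""
    for d in dilations:
        seq = dilated_conv1d(seq, kernel, d)
    return max_pool1d(seq, pool)


# Hypothetical settings; the actual per-branch values are those given in Fig. 3.
signal = list(range(10))                                   # one coordinate over 10 frames
print(dilated_conv1d(signal, [1, 1, 1], 2))                # [6, 9, 12, 15, 18, 21]
print([receptive_field(k, (1, 2)) for k in (3, 5, 7, 9)])  # [7, 13, 19, 25]
```

Branches with larger kernels see longer stretches of the motion, which is the intent behind running several branches with different kernel sizes in parallel.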

Attention aggregation module

To make the network attend more to the joints that carry richer motion information, this paper integrates and extracts the information from the different branches through the Attention Aggregation Module (AAM), as shown in Fig. 2c. First, the outputs of the branches are concatenated, and an initial convolution captures the local patterns and spatial relations of the data; the result serves as the module input X. Second, multilayer deep convolutions with a shared weight pool derive T, C, and R, which act as the target, context, and result in the motion evaluation, respectively. The aggregated information is then obtained as follows:

$$\begin{aligned} X_{node}=softmax\left(\frac{T\cdot \textrm{C}^{T}}{\sqrt{d}}\right)\cdot {Conv}(R+\mathfrak {J}(R)), \end{aligned}$$

(9)

where d is a normalization constant, and \(\mathfrak {J}\) is an activation function that processes the time stride and combines nonlinear and linear activation. To accelerate convergence and balance local and global contextual features, we add a residual connection that compensates with the initial input X:

$$\begin{aligned} \Delta x=Conv\left(\mathfrak {L}\left(Res(x)\right)\right), \end{aligned}$$

(10)

where \(\mathfrak {L}\) is the reshape operation. Finally, the two components are summed to produce the final output \(X^{\prime }\):

$$\begin{aligned} X^{\prime }=X_{node}+\Delta x, \end{aligned}$$

(11)
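Eqs. (9)-(11) follow the scaled dot-product attention pattern plus a residual term. The sketch below assumes T, C, and the value term are already computed: `v` stands in for \(Conv(R+\mathfrak {J}(R))\) and `delta_x` for the residual \(\Delta x\) of Eq. (10), since those layers are not specified here, and d is assumed to be the feature width of T and C. Names and values are hypothetical.

```python
import math


def matmul(a, b):
    """(n x m) @ (m x p) for nested lists."""
    return [[sum(row[t] * b[t][j] for t in range(len(b))) for j in range(len(b[0]))]
            for row in a]


def transpose(m):
    return [list(col) for col in zip(*m)]


def row_softmax(m):
    """Numerically stable softmax over each row."""
    out = []
    for row in m:
        peak = max(row)
        exps = [math.exp(v - peak) for v in row]
        total = sum(exps)
        out.append([e / total for e in exps])
    return out


def attention_aggregate(t, c, v, delta_x):
    """Eqs. (9)-(11): X' = softmax(T C^T / sqrt(d)) V + delta_x.

    v stands in for Conv(R + J(R)) and delta_x for Conv(L(Res(x))); both are
    passed in precomputed because those layers are not specified here.
    """
    d = len(t[0])  # assumption: d is the feature width of T and C
    scores = [[s / math.sqrt(d) for s in row] for row in matmul(t, transpose(c))]
    x_node = matmul(row_softmax(scores), v)
    return [[a + b for a, b in zip(ra, rb)] for ra, rb in zip(x_node, delta_x)]


# Hypothetical 2-position example: all-zero targets give uniform attention weights.
T = [[0.0, 0.0], [0.0, 0.0]]
C = [[1.0, 2.0], [3.0, 4.0]]
V = [[2.0], [4.0]]
DELTA_X = [[1.0], [-1.0]]
X_OUT = attention_aggregate(T, C, V, DELTA_X)
print(X_OUT)  # [[4.0], [2.0]]
```

When a target row aligns strongly with one context row, the softmax concentrates almost all weight on the corresponding value, which is how the module can emphasize high-amplitude motion; the residual term keeps the original input available either way.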

Combining the AAM with the residual branch enhances the network's robustness to noise while enabling finer-grained attention to high-amplitude motion information, which improves the network's ability to assess the rehabilitation quality of subtle movements. The overall process of FTF-HGCN is presented in Algorithm 1.

The entire process of FTF-HGCN.
